Sample rate, non-oversampling and oversampling

In the real world, music is continuous and images are continuous. Television and film, however, are made up of static pictures; the pictures are switched so rapidly that the human eye sees continuous motion. How fast do the pictures need to switch? About 50 per second. At that rate the eye no longer notices the switching between pictures. Of course, 50 per second is a minimum requirement; a faster rate improves the visual effect further.

Audio follows the same concept: as long as the intermittent information is dense enough, the ear interprets it as continuous music. So how much intermittent information does the ear need? First, the frequency range that the human ear can hear is 20-20000 Hz. If we want to reproduce the highest frequency, 20K, how much intermittent information is needed? Here I need to introduce a technical term, the “Nyquist frequency”. We do not need to go into the principle here; we only need the result: expressing a frequency of 20K requires at least twice that much “intermittent information”. We will call each piece of “intermittent information” a sampling point. In other words, to reproduce 20K audio we need at least 40K sampling points per second.

Now look at the standard CD format, 16bit / 44.1Ksps. I will not explain the 16bit part in this article; that will come in a follow-up article. The 44.1Ksps is the sampling rate. As we can see, it is only about 10% above the theoretical minimum of 40K, which is barely adequate.
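The margin arithmetic can be checked with a few lines of Python. This is only a sketch of the calculation described above; the function names are made up for illustration.

```python
def min_sample_rate(max_freq_hz):
    """Nyquist result: at least two sampling points per cycle of the highest frequency."""
    return 2.0 * max_freq_hz


def margin_percent(sample_rate_hz, max_freq_hz):
    """How far a sample rate sits above the theoretical minimum, in percent."""
    minimum = min_sample_rate(max_freq_hz)
    return (sample_rate_hz - minimum) / minimum * 100.0


cd_margin = margin_percent(44_100, 20_000)
print(f"minimum rate: {min_sample_rate(20_000):.0f} samples per second")
print(f"CD margin: {cd_margin:.2f}%")
```

For the CD rate this gives a margin of a little over 10%, which matches the figure quoted in the text.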

When the CD first appeared, there was no oversampling technology. But as people found that a sampling rate of 44.1K was not enough, how could the problem be solved? Oversampling technology was born. So what is oversampling? Simply put, because a 44.1K sampling rate is barely adequate, a higher sampling rate should give a better result. However, the CD only records 44.1K sampling points per second, so how can that become 88.2K, 176.4K or even higher? This requires interpolation: the missing sampling points are calculated mathematically and then inserted between the original sampling points.
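As a rough illustration of the interpolation step, here is a minimal Python sketch of integer oversampling: zeros are inserted between the original sampling points, and a low-pass filter then calculates the values in between. The Hann-windowed sinc filter and the names `windowed_sinc` and `oversample` are assumptions chosen for illustration; real DAC chips use their own filter designs.

```python
import math


def windowed_sinc(factor, half_width=8):
    """Hann-windowed sinc taps (an illustrative choice of interpolation filter)."""
    centre = half_width * factor
    taps = []
    for i in range(2 * centre + 1):
        t = (i - centre) / factor  # time in original sample periods
        sinc = 1.0 if t == 0 else math.sin(math.pi * t) / (math.pi * t)
        hann = 0.5 * (1.0 + math.cos(math.pi * t / half_width))
        taps.append(sinc * hann)
    return taps


def oversample(samples, factor, half_width=8):
    """Insert factor - 1 zeros after each sample, then filter to fill them in."""
    stuffed = []
    for s in samples:
        stuffed.append(s)
        stuffed.extend([0.0] * (factor - 1))
    taps = windowed_sinc(factor, half_width)
    centre = half_width * factor
    out = []
    for n in range(len(stuffed)):
        acc = 0.0
        for k, h in enumerate(taps):
            j = n + centre - k  # centre offset cancels the filter delay
            if 0 <= j < len(stuffed):
                acc += h * stuffed[j]
        out.append(acc)
    return out


# A 1K tone sampled at 44.1K, oversampled 4x to 176.4K.
original = [math.sin(2 * math.pi * 1_000 * n / 44_100) for n in range(32)]
upsampled = oversample(original, factor=4)
print(len(original), "->", len(upsampled))
```

With this particular filter every fourth output point is still an original sampling point (up to rounding), and the three points in between are calculated ones.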

In oversampling, an integer multiple is the easiest to do and the least prone to problems. We should therefore make sure that the oversampling ratio is an integer. A non-integer ratio, such as converting 44.1K to 48K, requires more computing resources and is very prone to problems, so it should be avoided where possible.

Today, digital audio has gone further and further down the oversampling road. 4x and 8x are no longer surprising; 16x, 32x or even higher is the mainstream trend. At 32x, only 1 of every 32 sampling points comes from the originally recorded information; the remaining 31 are derived by interpolation. So can we really trust those 31 interpolated sampling points? That is the topic we need to discuss next.

It has to be said that mainstream audio technology, and even its designers, have confidence in those 31 calculated sampling points. But the fact is that oversampling is not well suited to audio applications. More and more people are becoming aware of this problem and finding that what they previously believed in is in fact flawed. Yes, we are now in the process of rejecting the current mainstream technology. It is like China finally realizing the importance of environmental protection and starting to cut back many highly polluting industries. Those industries brought us wealth, but we now pay more attention to the environment, and wealth can be created in other ways.

The advantages of oversampling

Before talking about the problems of oversampling, let’s talk about its benefits. The people in the audio industry are not all fools. If oversampling had no benefits, nobody would have been interested in it, and it would not have been developed to where it is today.

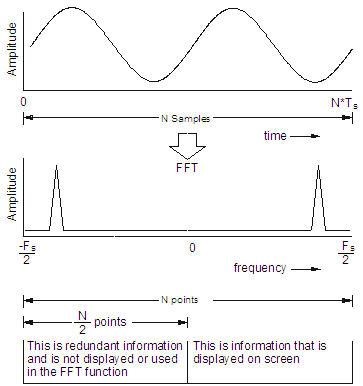

First of all, if we analyze the spectrum of a signal sampled at a rate of F, the spectrum has a center at F / 2, and the parts to the left and right of that center are mirror images of each other. Take a 1K audio signal at a 48K sampling rate: in the spectrum you can see a signal at 1K and another signal at 47K.

Half of 48K is 24K. With 24K as the center, the 1K signal and the 47K signal are mirror images of each other. Similarly, a 5K signal has a mirror image at 43K.
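The mirror relationship is simple enough to write down directly. A small sketch, with a made-up function name:

```python
def image_frequency_hz(signal_hz, sample_rate_hz):
    """Mirror of signal_hz about the center at sample_rate_hz / 2.

    Reflecting f about F / 2 gives F / 2 + (F / 2 - f), which simplifies to F - f.
    """
    return sample_rate_hz - signal_hz


for f in (1_000, 5_000):
    print(f, "->", image_frequency_hz(f, 48_000))
```

At a 48K sampling rate this reproduces the two pairs mentioned above: 1K mirrors to 47K and 5K mirrors to 43K.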

These mirror images are distributed between 24K and 48K. Since the human ear cannot hear frequencies above 20K, it might seem they can be ignored. But if the amplifier that follows is not ideal, two different frequencies may cause intermodulation distortion (IMD), producing a new frequency that falls within the audio range. Therefore, to eliminate this hidden risk and reduce the demands on the following amplifier, signals outside the audio bandwidth are better filtered out. The problem is the analog filter: keeping amplitude and phase flat across the 20K bandwidth while also filtering out the very close 24K-48K signals is impossible. A high-order analog filter can cut off very steeply, but it has a great impact inside the 20KHz audio bandwidth, so the result is not worth the candle.

The advantage of digital filters is that they can be made nearly perfect in the frequency domain, and they can do oversampling and filtering easily. Take 4x oversampling as an example: 48K * 4 = 192K. Half of 192K is 96K, so the mirror image of the 0-20K audio signal about the 96K center lies at 172K-192K. The buffer zone opened up in between is large enough to allow the analog filter to work well.
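To put a number on the buffer zone, the sketch below compares the gap between the top of the audio band and the start of its first mirror image, with and without 4x oversampling. It assumes the audio content is limited to 20K; the function name is hypothetical.

```python
def guard_band_hz(audio_bw_hz, base_rate_hz, factor=1):
    """Gap between the top of the audio band and the start of its first mirror image."""
    output_rate_hz = base_rate_hz * factor
    first_image_start_hz = output_rate_hz - audio_bw_hz
    return first_image_start_hz - audio_bw_hz


nos_gap = guard_band_hz(20_000, 48_000)            # no oversampling
os4_gap = guard_band_hz(20_000, 48_000, factor=4)  # 4x oversampling, 192K
print("no oversampling:", nos_gap, "Hz")
print("4x oversampling:", os4_gap, "Hz")
```

Under these assumptions the analog filter has only 8K of room without oversampling, and 152K of room at 4x.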

The Audio Engineering Society has specified a measurement standard for DACs and digital amplifiers, AES17, which requires signals outside the 20K audio range to be filtered out completely so that they do not affect the measurement. If the DAC is oversampling, not following the standard has no impact, because the out-of-band signals have already been filtered out very cleanly. For a non-oversampling DAC, however, not following the standard will give wrong measurement results.

In addition, without oversampling, the amplitude at high frequencies is attenuated because there are too few samples per cycle. At a 48K sampling rate, a 20K signal is attenuated by 2-3 dB. Oversampling does not have this problem.
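The 2-3 dB figure can be estimated with a short calculation, assuming the attenuation meant here is the sinc roll-off of a DAC output that holds each sample until the next one (a zero-order hold). That reading is an assumption, not something stated above, and the function name is made up.

```python
import math


def hold_droop_db(freq_hz, sample_rate_hz):
    """Gain of a zero-order hold at freq_hz, in dB: 20 * log10(sin(x) / x), x = pi * f / fs."""
    x = math.pi * freq_hz / sample_rate_hz
    gain = 1.0 if x == 0 else math.sin(x) / x
    return 20.0 * math.log10(gain)


droop_48k = hold_droop_db(20_000, 48_000)    # no oversampling
droop_192k = hold_droop_db(20_000, 192_000)  # 4x oversampling
print(f"20K at 48K:  {droop_48k:.2f} dB")
print(f"20K at 192K: {droop_192k:.2f} dB")
```

Under this assumption the loss at 20K is a little over 2.6 dB at a 48K sampling rate and under 0.2 dB at 192K.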

The problems of oversampling

As you can see, oversampling really is a very good technology, and it solves a lot of problems. It can be said that oversampling based on digital filters is perfect in the frequency domain; even a very simple digital filter performs superbly there. Now we have to talk about the problems of oversampling, which are why we want to reject this technology.

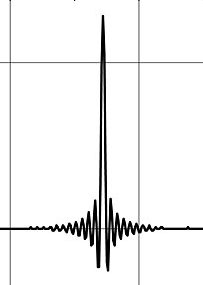

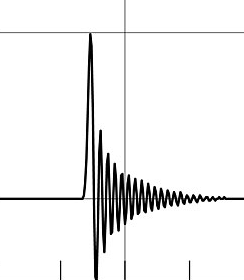

First, oversampling produces ringing artifacts. A pulse signal without oversampling should look like Figure 1, but the oversampling techniques in use today distort it into Figure 2 or Figure 3.

Figure 1 Figure 2 Figure 3

In Figure 2, we see that when the signal changes abruptly, ringing appears both before and after the transition. Some newer techniques give the result in Figure 3: they effectively remove the pre-ringing, but the post-ringing becomes quite large.
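The ringing before and after the pulse, as in Figure 2, can be reproduced numerically. The sketch below computes the response of a symmetric (linear-phase) interpolation filter to a single pulse. The Hann-windowed sinc is an assumed, illustrative filter rather than the filter of any particular DAC, and the case without pre-ringing in Figure 3 is not modelled here.

```python
import math


def linear_phase_taps(factor=8, half_width=8):
    """Pulse response of an illustrative Hann-windowed sinc interpolation filter."""
    centre = half_width * factor
    taps = []
    for i in range(2 * centre + 1):
        t = (i - centre) / factor  # time in original sample periods
        sinc = 1.0 if t == 0 else math.sin(math.pi * t) / (math.pi * t)
        hann = 0.5 * (1.0 + math.cos(math.pi * t / half_width))
        taps.append(sinc * hann)
    return taps


taps = linear_phase_taps()
centre = len(taps) // 2
pre_ringing = taps[:centre]        # output before the pulse peak
post_ringing = taps[centre + 1:]   # output after the pulse peak

print("peak:", taps[centre])
print("largest undershoot before the peak:", min(pre_ringing))
print("largest undershoot after the peak:", min(post_ringing))
```

Because the filter is symmetric, the ringing before the peak is a mirror image of the ringing after it, so a single pulse is smeared in both directions in time.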

From a musical point of view, pre-ringing is like something coming out before the note should appear, which feels odd. Post-ringing is like an echo and adds extra reverb. The playback environment usually has some reverb of its own. In a room, for example, sound reaches you along multiple paths: first the direct sound travels from the source to your ear, then sound reflected off a wall arrives, and some sound is reflected several times before it reaches your ears. Note that the post-ringing in Figure 3 is much bigger than in Figure 2; at that level, the sound feels lagged and the transients are poor. In addition, the post-ringing disturbs the original room reverberation, which causes problems with the positioning of instruments in the sound field. The reverberation pattern of a room is fixed, but the post-ringing generated by oversampling changes all the time. So the sound field becomes confused and the instruments seem to fly around. From a listening point of view, I personally think Figure 2 is less of a problem than Figure 3.

If the waveforms do not make it obvious how ringing artifacts affect the listening experience, the following picture may be more intuitive, because ringing also exists in image processing.

On the left is the original image; on the right is the image with ringing artifacts. Some people may think the right image shows more high-resolution detail. Edge sharpening does highlight some lines, but at the cost of delicate texture and natural transitions. Have you noticed that behind this man there is a man in black who is looking back? In the original image we hardly notice him, but in the right image he is much easier to notice. So, as an audiophile, which do you prefer?

Why, if these problems look so bad, have they gone unnoticed as a big problem for so long? Because the audio industry uses spectrum analysis to measure its various standard specs. The digital filters used for oversampling have perfect performance in the frequency domain, but in the time domain they are very bad. Put simply, the frequency domain is equivalent to the content, and the time domain is equivalent to the order.

For example, in each of the following statements the content is complete:

- Little monkey can not find the father and mother, very anxious.

- Little monkey can not find the father, and mother very anxious. (A small change in the order, but it already causes ambiguity.)

- Dad can not find mother, little monkey very anxious. (A greater change in the order, and greater ambiguity.)

- Dad not find can little, very mother monkey anxious. (An even more chaotic order; the content is still complete, but it has no meaning.)

Moreover, can mathematical calculation really work out the missing information well enough to make up for it?

Let’s first talk about human vision and hearing. The eyes and ears are just organs that pick up information. The information is passed to the brain, which has dedicated parts responsible for interpreting it. The brain is very powerful at this kind of analysis; modern science, even with a supercomputer, cannot compete with it. How the brain analyzes this information so well is still a mystery to modern science.

As a visual example, we know that two eyes are needed to form three-dimensional vision. Yet if you close one eye, you notice some effect but not a large one: you can still tell how far away objects are, and you can still get on with daily life. From the 2D information captured by one eye alone, the brain can easily make up three-dimensional information that the original information does not contain.

As a hearing example, we have only two ears, on a horizontal line. That we can distinguish left from right in the sound field is easy to understand, because the two ears are on a horizontal line. But why can we tell whether a sound is coming from above or below? This too is because the brain does some very complex calculation and makes up the vertical information that two horizontally placed ears cannot pick up directly.

Neither of these two examples can be achieved with modern science, even if we ignore the cost. For the human brain it is a piece of cake.

Suppose there is a very simple sequence:

1234567

The middle one is lost:

123_567

A computer can fill in the lost information very easily. The human brain can also fill it in very easily.

Now suppose there is a slightly more complex sentence:

Little monkey can not find the father and mother, very anxious.

One word is lost:

Little monkey can ___ find the father and mother, very anxious

If an audio digital filter tries to fill it in, the result is something strange like this:

Little monkey can can find the father and mother, very anxious

But for the human brain, filling in this information correctly is not difficult at all. The brain analyzes the rest of the message to understand what the whole sentence should mean, and then fills in the missing information correctly.

So the conclusion is this: for simple, monotonous information, existing calculation methods can fill in the gaps correctly. But the more complex the information, the more wrong information the existing calculation methods fill in. In this case the human brain is much more powerful.

For missing information, the traditional audio industry believes that “something is always better than nothing”, but this is actually the wrong direction. For people, it is “better to go without than to have something shoddy”: wrong information is far more harmful than no information. Without the information, our brain can make it up. Wrong information, however, is misleading, and it also takes away the brain’s opportunity to make it up. It is like a pot of soup that is short of a piece of meat. That may not be a big deal, but not if you make up for it by putting a dead rat into the soup. A rat is also meat, so by the measurement specs a pot of soup topped up with enough meat is the better result.

Measurement and listening

Here we can see that the audio industry’s existing measurement specs do not correspond to people’s listening experience. We cannot use those numbers to quantify our listening pleasure. These specs tend to analyze the completeness of the content, but that is not how human perception works. Complete information in a chaotic order has disastrous consequences. Likewise, more information is not necessarily better than less, and with a well-designed non-oversampling DAC we find richer and more natural detail.

2 is greater than 1; even primary school students know that. But the more advanced wisdom is knowing how to make the right trade-off. Less is more.

Fantastic explanation! Your theories go beyond mathematics and encompass neuroscience as well. I really appreciate it. I have May KTE and love it! Thank you!